Build a Procedural Datastore

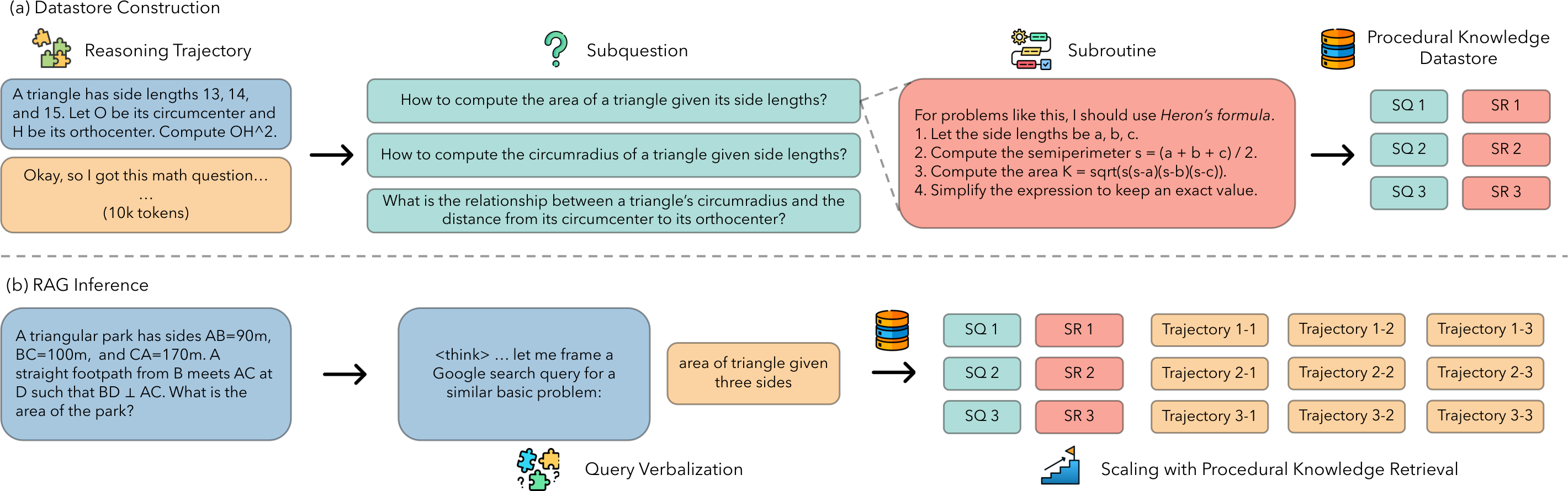


Preprint · arXiv:2604.01348 · cs.CL

Reasoning Memory turns existing reasoning trajectories into a large procedural datastore, then retrieves compact subroutines inside a model's thinking stream to improve test-time scaling on math, science, and coding tasks.

University of California, Los Angeles · Meta FAIR · * Work done while at Meta FAIR

Framework

Motivation

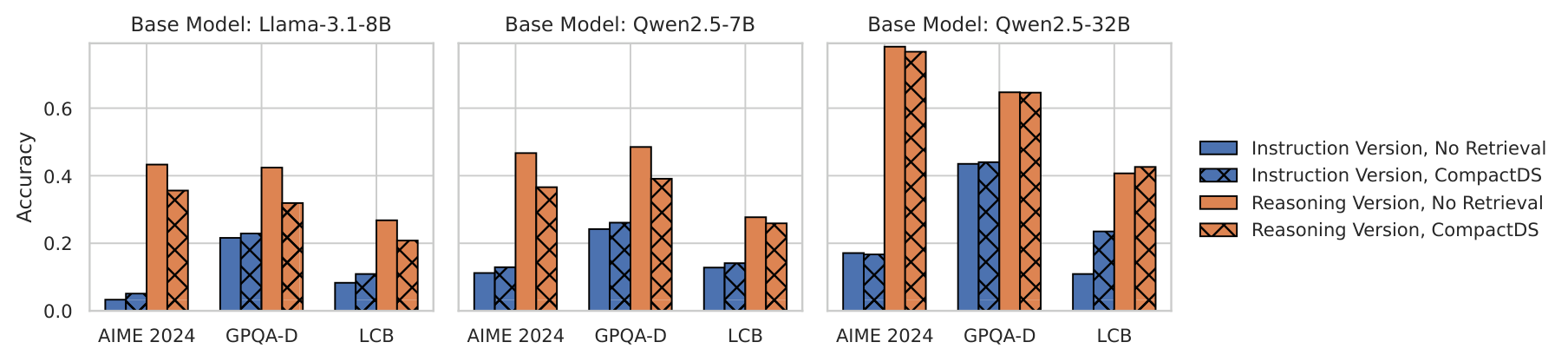

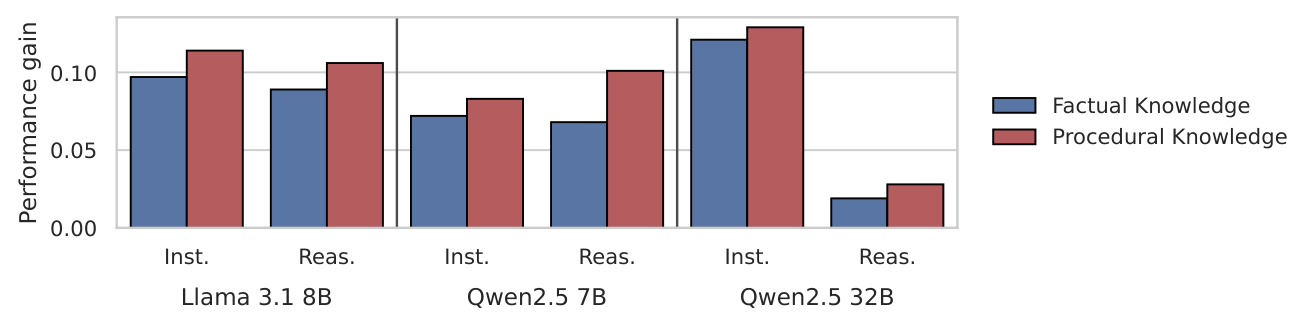

The paper first tests a standard document-level RAG pipeline on paired instruction-tuned and reasoning models. Retrieved documents help the instruction-tuned models more reliably, while the reasoning models often see limited or even negative gains. A controlled knowledge-injection study then shows that procedural guidance is the more useful form of retrieved context for reasoning models.

Core idea

Standard document RAG often retrieves facts or background passages. Reasoning Memory instead retrieves procedural knowledge: how to reframe a problem, choose an approach, verify progress, and backtrack when needed.

Public reasoning trajectories are decomposed into self-contained subquestions and concise reusable subroutines, yielding about 32 million procedural entries.
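As a rough illustration of what a procedural entry could look like, the sketch below stores each entry as a (subquestion, subroutine) pair. The `ProceduralEntry` class, the `build_datastore` helper, the input format, and the exact-duplicate filtering are all hypothetical; the decomposition of trajectories itself is not reproduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProceduralEntry:
    """One datastore record: a self-contained subquestion plus a reusable subroutine."""
    subquestion: str
    subroutine: str


def build_datastore(decomposed_steps):
    """Collect (subquestion, subroutine) pairs into entries.

    `decomposed_steps` is assumed to come from some upstream decomposition
    step (hypothetical here). Dropping exact duplicates is an illustrative
    choice, not something the source describes.
    """
    seen = set()
    datastore = []
    for subquestion, subroutine in decomposed_steps:
        entry = ProceduralEntry(subquestion.strip(), subroutine.strip())
        if entry not in seen:
            seen.add(entry)
            datastore.append(entry)
    return datastore


steps = [
    ("How do you convert a number from an arbitrary base b to its decimal equivalent?",
     "Write each digit times the corresponding power of the base and sum the terms."),
    # Same pair again, with stray whitespace, to show the duplicate filter.
    ("How do you convert a number from an arbitrary base b to its decimal equivalent? ",
     "Write each digit times the corresponding power of the base and sum the terms."),
]
datastore = build_datastore(steps)
```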

A lightweight in-thought prompt makes the model verbalize the current subquestion as a compact query, then retrieves matching procedures with a dense retriever.
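The retrieval step can be sketched roughly as follows. This is a toy under stated assumptions: `toy_embed` is a bag-of-words stand-in for a real dense encoder, `retrieve` is a hypothetical helper, and the second datastore entry is invented for contrast.

```python
import math
from collections import Counter


def toy_embed(text):
    """Stand-in for a dense encoder (assumption): a bag-of-words count vector."""
    words = "".join(c.lower() if c.isalnum() else " " for c in text).split()
    return Counter(words)


def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[w] * v[w] for w in u if w in v)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def retrieve(query, entries, k=1):
    """Return the k (subquestion, subroutine) pairs whose subquestion best matches the query."""
    q = toy_embed(query)
    ranked = sorted(entries, key=lambda e: cosine(q, toy_embed(e[0])), reverse=True)
    return ranked[:k]


entries = [
    ("How do you convert a number from an arbitrary base b to its decimal equivalent?",
     "Write each digit times the corresponding power of the base and sum the terms."),
    # Hypothetical distractor entry, not taken from the source.
    ("How do you check whether one integer divides another?",
     "Compute the remainder and test whether it is zero."),
]

# The query is the compact subquestion the model verbalizes mid-reasoning.
top = retrieve("17 in base b is equal to what in decimal?", entries, k=1)
```

In a real system the count vectors would be replaced by embeddings from a trained dense retriever and the linear scan by an approximate nearest-neighbor index, which matters at the scale of tens of millions of entries.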

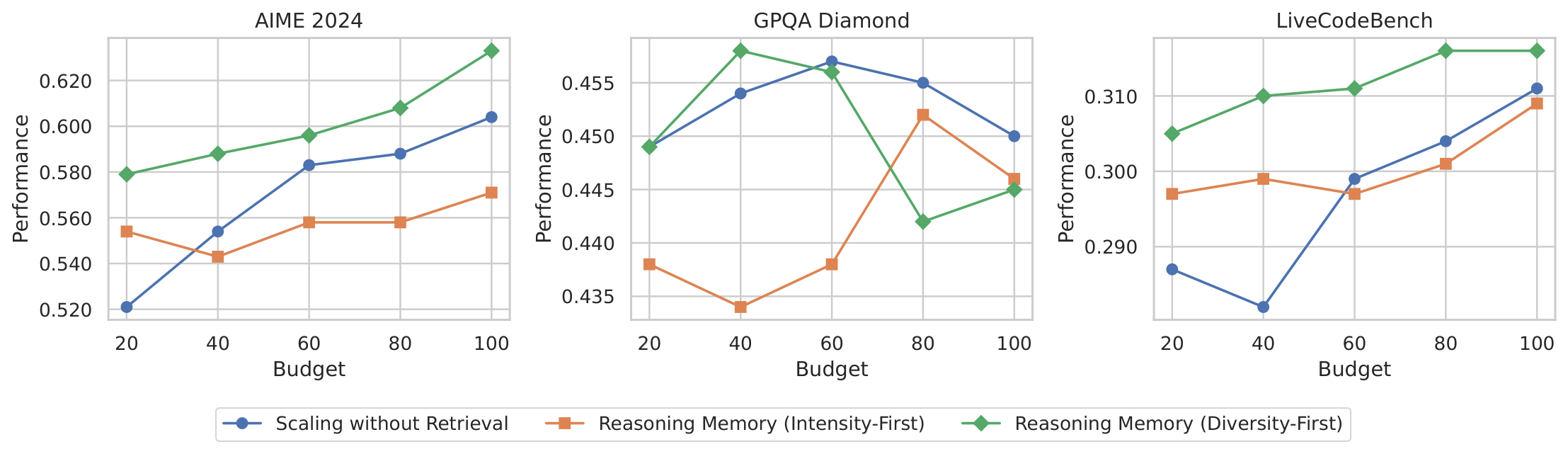

The model samples multiple trajectories under different retrieved subroutines and filters candidates with a simple length-based uncertainty heuristic.
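One way such a filter might work is sketched below. The source only says the heuristic is length-based, so the specific rule here is an assumption: longer trajectories are treated as more uncertain, only the shortest fraction is kept, and the kept candidates vote. The `select_answer` helper, the `keep_fraction` parameter, the majority vote, and the token counts are all hypothetical.

```python
from collections import Counter


def select_answer(candidates, keep_fraction=0.5):
    """Pick an answer from (answer, num_tokens) candidates.

    Assumption: longer trajectories signal higher uncertainty, so only the
    shortest `keep_fraction` of candidates are allowed to vote. The actual
    rule used in the paper may differ.
    """
    if not candidates:
        raise ValueError("need at least one candidate")
    ranked = sorted(candidates, key=lambda c: c[1])  # shortest first
    n_keep = max(1, int(len(ranked) * keep_fraction))
    votes = Counter(answer for answer, _ in ranked[:n_keep])
    return votes.most_common(1)[0][0]


# Hypothetical candidates, each sampled under a different retrieved subroutine.
candidates = [("70", 1800), ("70", 2100), ("56", 5200), ("49", 6400)]
chosen = select_answer(candidates)
```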

Results

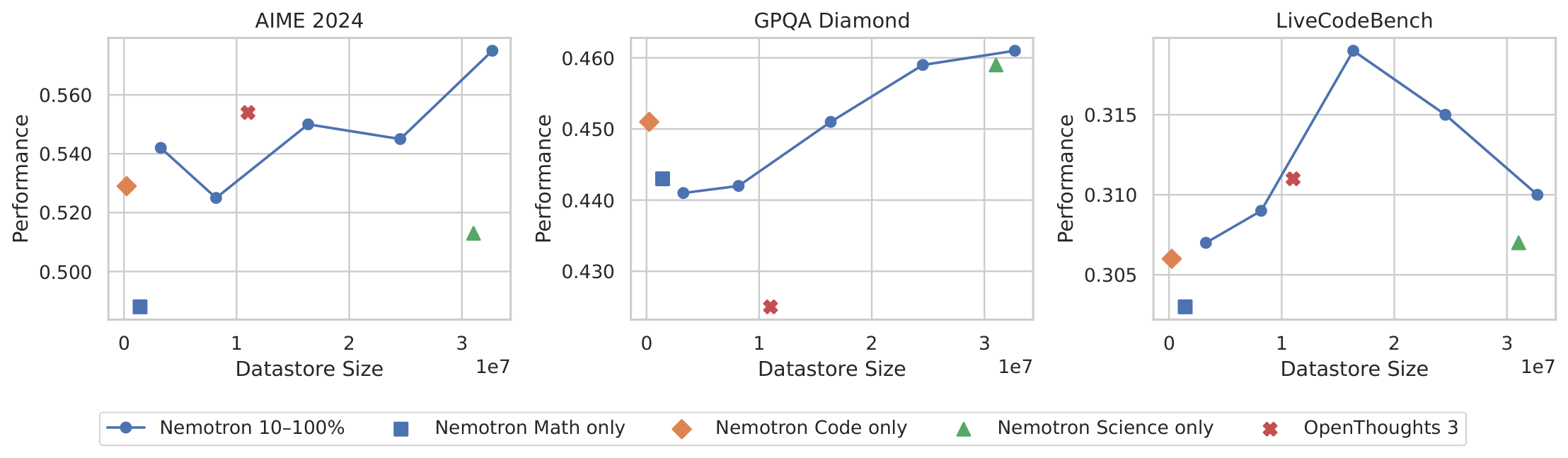

Reasoning Memory is evaluated on AIME 2024/2025, MATH500, GPQA-Diamond, and LiveCodeBench with DeepSeek-R1-Distill-Llama-8B, OpenThinker3-7B, and Qwen3-32B. The table below compares Reasoning Memory against the retrieval-free length-scaling baseline at the higher budget setting.

| Model | Method | AIME 2024 | AIME 2025 | MATH500 | GPQA-D | LCB V1-4 | LCB V5-6 |
|---|---|---|---|---|---|---|---|
| DeepSeek-R1-Distill-Llama-8B | Length Scaling | 0.548 | 0.358 | 0.802 | 0.447 | 0.282 | 0.302 |
| DeepSeek-R1-Distill-Llama-8B | Reasoning Memory | 0.575 | 0.392 | 0.836 | 0.461 | 0.310 | 0.325 |
| OpenThinker3-7B | Length Scaling | 0.647 | 0.528 | 0.873 | 0.502 | 0.345 | 0.343 |
| OpenThinker3-7B | Reasoning Memory | 0.758 | 0.679 | 0.911 | 0.542 | 0.381 | 0.412 |
| Qwen3-32B | Length Scaling | 0.812 | 0.619 | 0.908 | 0.682 | 0.405 | 0.476 |
| Qwen3-32B | Reasoning Memory | 0.838 | 0.754 | 0.923 | 0.681 | 0.471 | 0.508 |

Higher is better. Values are accuracy or pass@1 depending on the benchmark. See the paper for all baselines, budgets, and significance tests.
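To read the table as absolute gains over the length-scaling baseline, a small script like the one below can help. The numbers are copied from the table; the `gains` helper and the dictionary layout are just an illustrative choice.

```python
BENCHMARKS = ["AIME 2024", "AIME 2025", "MATH500", "GPQA-D", "LCB V1-4", "LCB V5-6"]

# Scores copied from the results table (higher is better).
RESULTS = {
    "DeepSeek-R1-Distill-Llama-8B": {
        "Length Scaling":   [0.548, 0.358, 0.802, 0.447, 0.282, 0.302],
        "Reasoning Memory": [0.575, 0.392, 0.836, 0.461, 0.310, 0.325],
    },
    "OpenThinker3-7B": {
        "Length Scaling":   [0.647, 0.528, 0.873, 0.502, 0.345, 0.343],
        "Reasoning Memory": [0.758, 0.679, 0.911, 0.542, 0.381, 0.412],
    },
    "Qwen3-32B": {
        "Length Scaling":   [0.812, 0.619, 0.908, 0.682, 0.405, 0.476],
        "Reasoning Memory": [0.838, 0.754, 0.923, 0.681, 0.471, 0.508],
    },
}


def gains(model):
    """Absolute gain of Reasoning Memory over Length Scaling, per benchmark."""
    base = RESULTS[model]["Length Scaling"]
    ours = RESULTS[model]["Reasoning Memory"]
    return {b: round(o - l, 3) for b, l, o in zip(BENCHMARKS, base, ours)}


for model in RESULTS:
    print(model, gains(model))
```

In this table the gain is positive in every cell except Qwen3-32B on GPQA-D, where the two methods are within 0.001 of each other. The largest single gain is OpenThinker3-7B on AIME 2025 (+0.151).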

Analysis

Qualitative example

The model generates a short query that reflects its current subproblem, retrieves a matching procedural hint, and continues reasoning under that hint without needing to copy it verbatim.

Problem: Find the sum of all integer bases $b > 9$ for which $17_b$ is a divisor of $97_b$.

Generated query: "17 in base b is equal to what in decimal?"

Retrieved subquestion: How do you convert a number from an arbitrary base b to its decimal equivalent?

Retrieved subroutine: Write each digit times the corresponding power of the base, sum the terms, and first check that all digits are valid in the base.
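For readers who want to see the retrieved procedure applied, here is a minimal sketch that follows it on this problem. The `from_base` helper is hypothetical and uses Horner's rule, which is equivalent to summing each digit times its power of the base. The brute-force search is only an illustration, not the model's actual reasoning.

```python
def from_base(digits, base):
    """Convert digits (most significant first) in the given base to an integer."""
    # Check digit validity first, as the retrieved subroutine suggests.
    if any(d < 0 or d >= base for d in digits):
        raise ValueError("digit out of range for base")
    value = 0
    for d in digits:
        value = value * base + d  # Horner's rule
    return value


# 97 in base b is 9b + 7 = 9(b + 7) - 56, so (b + 7) must divide 56 and
# therefore b <= 49; a search bound of 100 is more than enough.
valid = [b for b in range(10, 100)
         if from_base([9, 7], b) % from_base([1, 7], b) == 0]
total = sum(valid)
print(valid, total)  # [21, 49] 70
```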

Citation

@article{wu2026procedural,
  title={Procedural Knowledge at Scale Improves Reasoning},
  author={Wu, Di and Sachan, Devendra Singh and Yih, Wen-tau and Chen, Mingda},
  journal={arXiv preprint arXiv:2604.01348},
  year={2026},
  archivePrefix={arXiv},
  eprint={2604.01348},
  primaryClass={cs.CL}
}